Mobile A/B Testing in India: 5 Tests That Actually Move the Needle

More than 70% of India's web traffic is mobile, yet most A/B tests are designed for desktop. Here's how to run mobile-specific experiments that improve conversion in the Indian market.

India’s internet is mobile. More than 70% of all website traffic in India comes from smartphones. Yet most A/B testing frameworks, case studies, and best practices were built for desktop-first markets in the US and Europe. (If you’re also targeting Singapore and Southeast Asia, read our Singapore A/B testing guide for SEA-specific benchmarks.)

The result: Indian brands are running desktop A/B tests on pages that 70% of their audience views on a phone — and wondering why the results don’t move.

This guide covers what’s different about mobile A/B testing in India, which tests actually work for the Indian mobile user, and how to set up a mobile-first experimentation programme from scratch.

Why Indian Mobile Conversion Rates Are Different

India’s mobile web experience has structural differences from Western markets that directly affect what you should test:

1. Network conditions vary dramatically

4G penetration is high in metro cities, but 3G and variable 4G speeds are common in Tier 2/3 cities. Page load time is the #1 conversion killer in India. The average Indian user will abandon a page that takes more than 3 seconds to load. A 1-second improvement in page load time can improve conversion by 7% on Indian mobile traffic.

2. COD vs. UPI vs. card payments behave differently

India’s payment psychology is unique. Cash on Delivery (COD) orders have higher abandonment rates but a lower barrier to initial checkout completion. UPI payments (PhonePe, Google Pay) are fast but require context-switching. Credit/debit cards are the smallest segment. Testing payment flow copy, and which payment method to show first, is a specifically Indian opportunity.

3. First-time vs. returning user patterns are different

Indian D2C customers often require more social proof on first purchase. Peer reviews, influencer mentions (“as seen with [influencer]”), and WhatsApp-based testimonials convert differently than Western-style star-rating reviews.

4. Language matters

India has 22 constitutionally scheduled languages, including Hindi, Tamil, Telugu, Kannada, and Marathi. Brands that test only in English are leaving conversion on the table in Tier 2 cities, where vernacular interfaces significantly outperform English-only ones.

The 5 Mobile A/B Tests That Move Conversion in India

Test 1: Sticky Mobile CTA Bar

The problem: On desktop, a CTA button in the hero section is always visible. On mobile, users scroll past it within seconds and there’s no persistent reminder to take action.

The test:

- Control: Standard page with CTA button in hero section only

- Variant: Sticky bottom bar that follows the user as they scroll — shows a compact version of the primary CTA

Expected impact: 15–30% improvement in CTA click-through rate on mobile

What to test in the variant: The copy on the sticky bar (“Start Free” vs. “Try ClickVariant Free” vs. “Get Started — Free Forever”), the color, and whether showing the price point helps or hurts.

Implementation: ClickVariant’s visual editor lets you add a CSS-based sticky bar without any developer involvement. Target the variant specifically to mobile visitors (screen width < 768px).

Test 2: COD-First vs. UPI-First Payment Layout

The problem: Most Indian D2C brands default to showing UPI as the first payment option because it’s fastest to process and has the lowest return rate. But COD converts at a higher rate for first-time buyers.

The test:

- Control: Checkout showing UPI as the default/first payment option

- Variant A: COD listed first (“Cash on Delivery — Pay when your order arrives”)

- Variant B: A prompt that shows the trade-off (“Choose faster UPI checkout or familiar COD”)

Expected impact: 8–15% improvement in checkout initiation rate (more users starting checkout), though UPI completion may vary.

India-specific insight (upgrowth.in, 2026): 60%+ of Indian ecommerce orders are COD. Even brands trying to shift to prepaid should test whether showing COD prominently, as a reassurance signal, improves overall checkout starts. For a comprehensive guide to D2C conversion testing, see our A/B testing guide for Indian D2C brands.
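A three-arm test like this one needs each shopper to see the same payment layout on every visit. The sketch below shows one common way to do that, hash-based bucketing. It is an illustration only, not a description of how ClickVariant assigns visitors; the variant names, the experiment key, and the `assign_variant` helper are all hypothetical.

```python
import hashlib

# Hypothetical arm names for the payment layout test described above.
VARIANTS = ["control_upi_first", "variant_a_cod_first", "variant_b_tradeoff_prompt"]


def assign_variant(user_id: str, experiment: str = "payment-layout") -> str:
    """Map a user to one arm, returning the same arm on every call."""
    # Hash the experiment key together with the user ID so that separate
    # experiments bucket the same user independently of each other.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Read the first 8 hex digits as an integer and reduce modulo the
    # number of arms, which gives an approximately even split.
    bucket = int(digest[:8], 16) % len(VARIANTS)
    return VARIANTS[bucket]


if __name__ == "__main__":
    print(assign_variant("user-123"))
```

Because the assignment depends only on the user ID and the experiment key, no lookup table is needed to keep the experience consistent across sessions.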

Test 3: Hindi/Regional Language Hero vs. English Hero

The problem: English-language product pages are the default for Indian startups, even when targeting Tier 2 and Tier 3 cities where Hindi or regional languages are dominant.

The test:

- Control: English-language hero section

- Variant: Hindi or regional language hero — same offer, same value proposition, different language

- Targeting: Show the variant to users whose browser language is set to Hindi/regional (use audience targeting in ClickVariant)
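If you want to reason about the targeting rule outside a testing tool, the browser language normally reaches the server in the `Accept-Language` header. The sketch below is a simplified parser written under that assumption. The `hero_variant` helper, the arm names, and the list of supported language codes are hypothetical, and a visitor is treated as a regional-language user only when their top-ranked language is one of the supported codes.

```python
# ISO 639-1 codes for Hindi, Tamil, Telugu, Kannada, Marathi.
SUPPORTED_REGIONAL = ("hi", "ta", "te", "kn", "mr")


def top_language(accept_language: str) -> str:
    """Return the primary subtag of the highest-weighted language, or "en"."""
    candidates = []
    for part in accept_language.split(","):
        piece = part.strip()
        if not piece:
            continue
        tag, _, params = piece.partition(";")
        weight = 1.0  # a missing q-value means full weight
        params = params.strip()
        if params.startswith("q="):
            try:
                weight = float(params[2:])
            except ValueError:
                weight = 0.0  # treat malformed weights as lowest priority
        # "hi-IN" and "hi" both reduce to the primary subtag "hi".
        candidates.append((weight, tag.strip().lower().split("-")[0]))
    if not candidates:
        return "en"
    # max() keeps the first entry on ties, so header order is respected.
    return max(candidates, key=lambda c: c[0])[1]


def hero_variant(accept_language: str) -> str:
    """Choose the hero arm for a visitor based on their browser language."""
    lang = top_language(accept_language)
    return f"variant_{lang}" if lang in SUPPORTED_REGIONAL else "control_en"


if __name__ == "__main__":
    print(hero_variant("hi-IN,hi;q=0.9,en;q=0.8"))
```

Visitors whose top language is English or an unsupported language stay in the control, which keeps the comparison limited to the audience the regional hero was written for.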

Expected impact: 20–40% improvement in engagement metrics (scroll depth, time on page) for regional-language users; conversion lift varies

When this test is right for you:

- More than 30% of your traffic comes from Tier 2/3 cities

- Your product has aspirational appeal in non-metro India (EdTech, D2C health, fintech)

- You have team members who can review the regional language copy (don’t rely on machine translation for hero headlines)

Test 4: Page Load Speed — Image Compression Variant

The problem: Beautiful mobile-first design with high-resolution hero images and product photos destroys conversion in areas with slower mobile data.

The test:

- Control: Standard page with current images and assets

- Variant: Aggressively compressed images (WebP format, 60–70% quality), deferred non-critical JavaScript, smaller hero image for mobile viewports

Expected impact: 5–15% improvement in conversion rate for users on slow or high-latency networks, from performance changes alone with no design change

How to measure: Segment results by city tier if possible. The conversion lift from speed improvements is larger in Tier 2/3 cities than metros.

Note: This test is most valuable if your current GTmetrix/PageSpeed score on mobile is below 75. Check before building this variant.

Test 5: WhatsApp CTA vs. Form Fill

The problem: Email-based lead forms convert poorly for high-consideration purchases in India. Indian buyers increasingly prefer to have conversations over WhatsApp before committing.

The test:

- Control: Standard lead form (Name, Email, Company, Submit)

- Variant: WhatsApp CTA (“Chat with us on WhatsApp → [link]”) as the primary conversion action

Expected impact: Higher volume of top-of-funnel conversations; qualification rate depends on your follow-up process

India-specific context (upgrowth.in, 2026): WhatsApp-driven traffic converts 3–5x higher than organic search for high-consideration purchases in India. For SaaS demos, the WhatsApp initial touchpoint often shortens the sales cycle by allowing real-time Q&A.

Implementation: WhatsApp Business API with a pre-filled message (“Hi, I want to learn more about ClickVariant for [my startup]”). ClickVariant’s redirect experiment feature can test form vs. WhatsApp as the primary CTA without code changes.
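For a quick prototype of the pre-filled message, a WhatsApp click-to-chat link (the `wa.me` format, which is separate from the Business API itself) can carry the text as a URL-encoded query parameter. The sketch below builds such a link; the `whatsapp_link` helper is hypothetical and the phone number is a placeholder, not a real contact.

```python
from urllib.parse import quote


def whatsapp_link(phone: str, message: str) -> str:
    """Build a click-to-chat URL with a pre-filled message."""
    # Click-to-chat links expect the full international number as digits
    # only, so strip "+", spaces, and dashes.
    digits = "".join(ch for ch in phone if ch.isdigit())
    # Percent-encode the whole message, including spaces and punctuation.
    return f"https://wa.me/{digits}?text={quote(message, safe='')}"


if __name__ == "__main__":
    # Placeholder number for illustration only.
    print(whatsapp_link("+91 98765 43210", "Hi, I want to learn more about ClickVariant"))
```

Encoding the message matters because unescaped spaces, commas, or non-Latin characters (for example a Hindi greeting) can otherwise truncate the pre-filled text.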

Mobile-First Testing Setup: Step by Step

If you’re starting a mobile A/B testing programme from scratch:

Step 1: Audit your current mobile vs. desktop conversion rate gap

Pull your conversion rates by device type in Google Analytics. Most Indian startups find desktop converts 2–3x higher than mobile. The size of that gap tells you how much opportunity exists.

Step 2: Pick your highest-traffic mobile page

Use GA4 with a device segment filter. Usually it’s the homepage, a top landing page, or a product page.

Step 3: Run a heatmap on the mobile version

Where do users tap? Where do they stop scrolling? What elements get missed entirely on mobile? ClickVariant’s heatmap feature shows this for mobile and desktop separately.

Step 4: Form your hypothesis

“If we [change X], then [mobile conversion metric] will improve because [reason related to mobile user behaviour].”

Step 5: Build the variant targeting mobile only

In ClickVariant, use screen width targeting to show variants only to mobile visitors (width < 768px, the same cutoff used in Test 1). This way your desktop experience is unaffected.

Step 6: Run until significance

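Mobile traffic in India can be high-volume, which means tests can reach the required sample size faster. Use ClickVariant’s sample size calculator to fix how many visitors you need before launch, then evaluate significance once you reach that number rather than stopping at the first significant reading.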
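To get a feel for the numbers before opening a calculator, the standard two-proportion approximation can be sketched in a few lines. This is a generic textbook formula, not ClickVariant’s calculator; the 5% significance level, 80% power, and the example baseline and lift are assumptions chosen for illustration.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, relative_lift, alpha=0.05, power=0.8):
    """Visitors needed in each arm to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_finish(n_per_variant, arms, weekly_mobile_visitors):
    """Whole weeks needed to fill every arm at the given traffic level."""
    return ceil(n_per_variant * arms / weekly_mobile_visitors)


if __name__ == "__main__":
    # Assumed inputs: 2% baseline conversion, aiming to detect a 20% relative lift.
    n = sample_size_per_variant(0.02, 0.20)
    print(n, weeks_to_finish(n, arms=2, weekly_mobile_visitors=10_000))
```

With these assumed inputs the formula asks for roughly 21,000 visitors per arm, which shows why low baseline rates and small expected lifts need high-traffic pages.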

What Not to Test on Mobile (Common Mistakes)

Don’t test desktop elements on mobile: Navigation patterns, hover states, and sidebar layouts don’t translate to mobile. Test elements that are actually visible and interactive on the mobile version.

Don’t run desktop + mobile in the same test without segmenting results: A test that wins on desktop but loses on mobile (or vice versa) will show as neutral in aggregate. Always segment results by device.

Don’t test on low-traffic pages: Mobile-specific tests need mobile visitors to reach significance. If you have fewer than 5,000 monthly mobile visitors on a page, you’ll need 4–6 weeks to see results — focus on high-traffic pages first.
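The segmentation mistake above is easy to see with numbers. The sketch below runs a pooled two-proportion z-test per device and on the combined data. The counts are invented purely for illustration and the helper names are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

# Invented counts: (conversions, visitors) per arm and device.
ILLUSTRATIVE_RESULTS = {
    "desktop": {"control": (300, 5000), "variant": (360, 5000)},
    "mobile": {"control": (200, 10000), "variant": (160, 10000)},
}


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def lift_and_p(c_conv, c_n, v_conv, v_n):
    """Relative lift of variant over control, plus the test's p-value."""
    lift = (v_conv / v_n) / (c_conv / c_n) - 1
    return lift, two_proportion_p_value(c_conv, c_n, v_conv, v_n)


def segment_report(results):
    """Return {segment: (relative_lift, p_value)} plus an "all" row."""
    totals = [0, 0, 0, 0]
    report = {}
    for segment, arms in results.items():
        counts = (*arms["control"], *arms["variant"])
        totals = [t + c for t, c in zip(totals, counts)]
        report[segment] = lift_and_p(*counts)
    report["all"] = lift_and_p(*totals)
    return report


if __name__ == "__main__":
    for segment, (lift, p) in segment_report(ILLUSTRATIVE_RESULTS).items():
        print(f"{segment}: lift={lift:+.1%} p={p:.3f}")
```

In this made-up data the variant wins on desktop and loses on mobile, while the combined row shows only a small, non-significant difference, which is the pattern the aggregate view hides.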

The Benchmark: What Good Mobile Conversion Looks Like in India

| Industry / page type | Median conversion rate (India mobile) | Top 10% conversion rate (India mobile) |
|---|---|---|
| D2C product page (add to cart) | 1.5–2% | 4–6% |
| SaaS sign-up page | 2–3% | 6–9% |
| EdTech trial/enroll | 3–5% | 8–12% |
| Fintech app download | 8–10% | 18–22% |

If you’re at median, you’re leaving 2–4x conversion improvement on the table — all from testing, not from rebuilding.

Start Mobile A/B Testing Today

ClickVariant lets you target experiments specifically to mobile visitors, run device-specific heatmaps, and reach statistical significance faster on high-traffic Indian mobile pages.

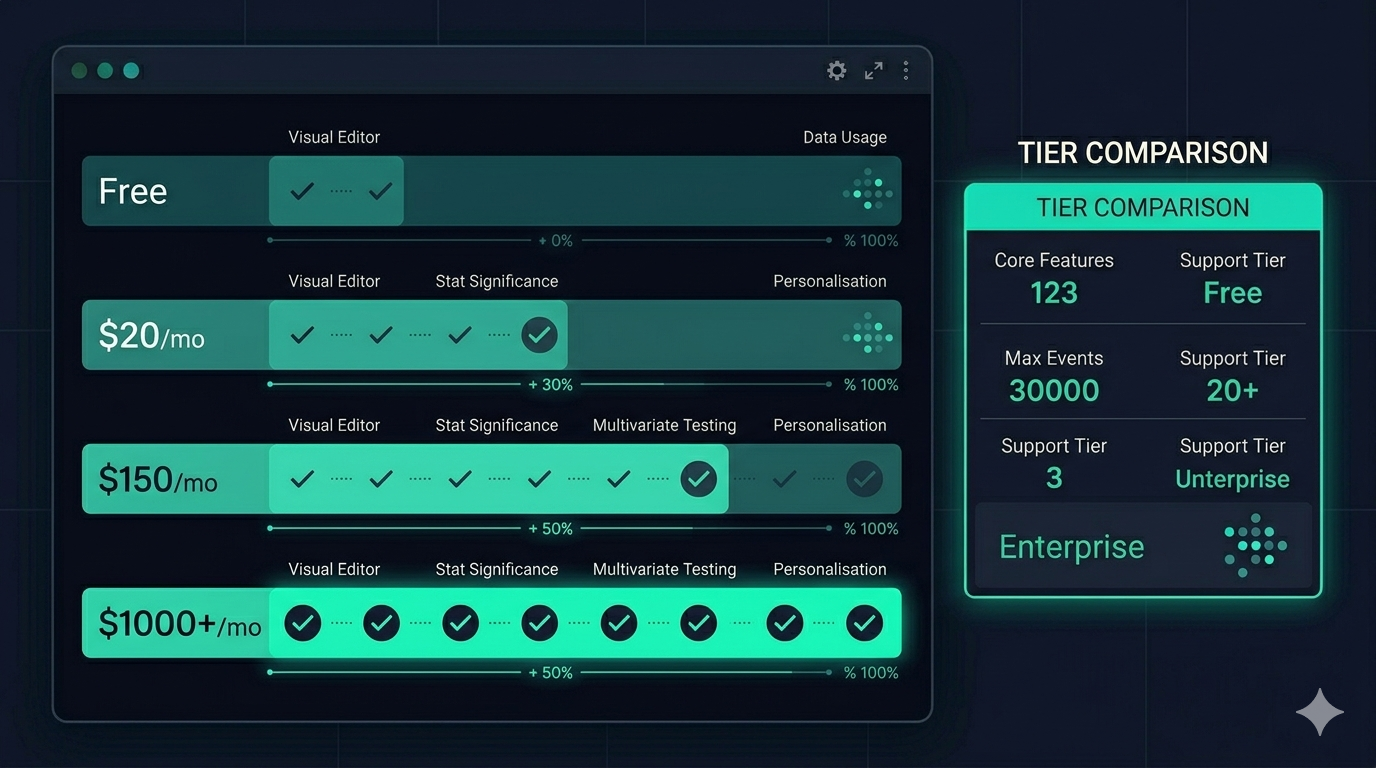

$20/month. No developer required for most tests. First mobile experiment live in under an hour.