Visual A/B Testing: Run Experiments Without Writing a Single Line of Code

Visual A/B testing lets you test changes on any page without a developer. Here's how it works, when to use it, and the best visual A/B testing tools in 2026.

Every growth team eventually hits the same wall: you have a hypothesis, you know what to test, but you can’t run the experiment without opening a developer ticket. The developer is busy. The ticket sits in the backlog for two weeks. By the time the test launches, the campaign it was meant to support is over.

Visual A/B testing breaks this cycle.

With a visual editor, you click the element you want to change, make the change directly in the browser, and launch the experiment — no code, no ticket, no waiting.

This guide explains how visual A/B testing works, what it can and can’t do, and which tools do it best in 2026.

How Visual A/B Testing Works

A visual A/B testing tool runs through a JavaScript snippet that you add to your website. When a visitor lands on the page, the snippet:

- Assigns them to a variant (A or B) — usually via a first-party cookie

- Applies the visual change to that variant — modifying the DOM (the page’s HTML structure) after the page loads

- Tracks whether that visitor converts on your goal (button click, form submit, page visit)

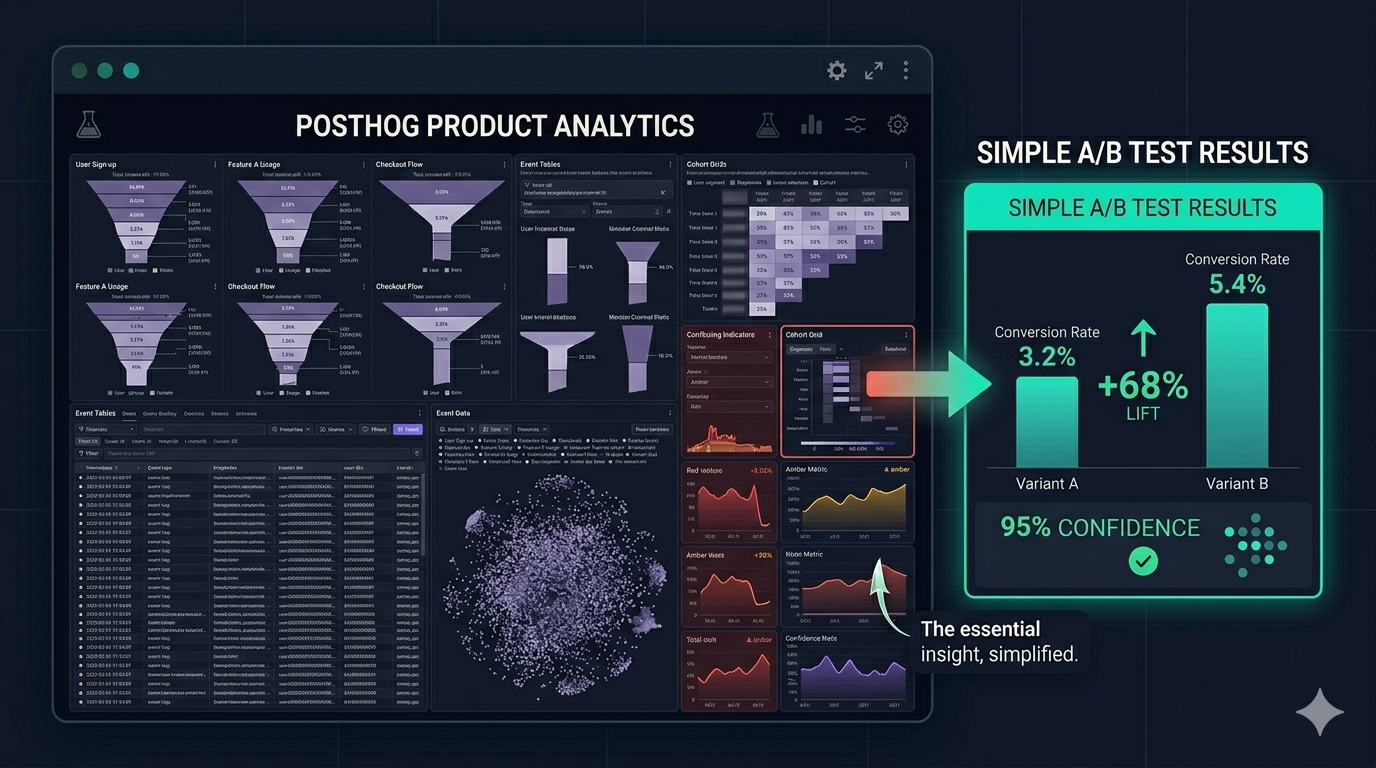

- Reports results with statistical significance
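The assignment step can be sketched roughly as follows. This is a minimal illustration, not any vendor's actual snippet: the cookie name `exp_variant`, the 30-day lifetime, and the function names are all assumptions made for the example.

```typescript
// Hypothetical sketch of cookie-based variant assignment.
type Variant = "A" | "B";

const COOKIE_NAME = "exp_variant"; // assumed name, not a real product's cookie

// Look for an existing assignment in a cookie string such as document.cookie.
function readVariant(cookieString: string): Variant | null {
  for (const part of cookieString.split(";")) {
    const [name, value] = part.trim().split("=");
    if (name !== COOKIE_NAME) continue;
    if (value === "A") return "A";
    if (value === "B") return "B";
  }
  return null;
}

// Return the visitor's variant, plus the cookie to set for new visitors.
function assignVariant(
  cookieString: string,
  random: () => number = Math.random,
): { variant: Variant; setCookie: string | null } {
  const existing = readVariant(cookieString);
  if (existing !== null) {
    // Returning visitor: keep them in the same variant.
    return { variant: existing, setCookie: null };
  }
  const variant: Variant = random() < 0.5 ? "A" : "B"; // 50/50 split
  const maxAge = 60 * 60 * 24 * 30; // 30 days, an arbitrary choice
  return {
    variant,
    setCookie: `${COOKIE_NAME}=${variant}; path=/; max-age=${maxAge}; SameSite=Lax`,
  };
}
```

In a browser you would call `assignVariant(document.cookie)` and, if `setCookie` is not null, write it back with `document.cookie = result.setCookie`. Storing the assignment matters because a visitor who sees variant A on one visit and variant B on the next would contaminate both groups.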

The visual editor is the front-end interface that lets you create variants by pointing and clicking. You select a headline, change the text. You select a button, change the colour. You rearrange sections without touching your codebase.

The key technology: most visual editors work by modifying the rendered DOM in the browser after the page loads (a client-side approach). This is the easiest approach to set up, but it can cause a brief “flicker”: a moment where the original version is visible before the variant snaps into place. Good tools minimise this through pre-loading techniques.
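One widely used way to reduce flicker is to hide the page briefly until the variant has been applied, with a timeout as a failsafe. This is not necessarily the pre-loading approach any tool in this guide takes. The sketch below assumes a hypothetical `applyVariant` function that resolves once the DOM change is done; the `Hideable` interface and the one-second default are also assumptions.

```typescript
// Minimal shape of the element we hide. In a browser, pass document.documentElement.
interface Hideable {
  style: { opacity: string };
}

// Hide the page, apply the variant, then reveal the page.
// If applying takes longer than timeoutMs, reveal the original anyway.
async function applyWithoutFlicker(
  root: Hideable,
  applyVariant: () => Promise<void>, // hypothetical: performs the DOM change
  timeoutMs: number = 1000,
): Promise<void> {
  root.style.opacity = "0"; // hide the original before the visitor notices it
  let timer: ReturnType<typeof setTimeout> | undefined;
  const failsafe = new Promise<void>((resolve) => {
    timer = setTimeout(resolve, timeoutMs);
  });
  try {
    await Promise.race([applyVariant(), failsafe]);
  } catch {
    // If the variant fails to apply, fall through and show the original page.
  } finally {
    clearTimeout(timer);
    root.style.opacity = ""; // always reveal the page again
  }
}
```

The trade-off is that the page stays blank while the variant loads, so the timeout should be short. A blank second is usually worse for conversion than a brief flicker.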

What Visual A/B Testing Is Good For

Visual testing is the right approach for:

Copy changes — Headlines, CTA button text, subheadings, body copy. These are the highest-leverage, lowest-risk changes in any testing programme. Change a headline in 30 seconds, not 2 days.

Layout adjustments — Moving a CTA above the fold, changing the order of page sections, removing navigation items on landing pages.

Colour and style changes — Button colours, background colours, font sizes. Low development effort, measurable conversion impact.

Image swaps — Testing a product photo vs. a lifestyle image, or different hero illustrations.

Form field reduction — Removing optional form fields, changing field order, testing required vs. optional labels.

Social proof placement — Testing whether testimonials above vs. below the CTA affect conversion.

All of these are changes that would traditionally require a developer to implement in code. With a visual editor, a marketer or growth manager does them directly.

What Visual A/B Testing Can’t Do

Honest answer: there are tests that visual editors handle poorly.

Personalisation at scale — If you want to show different content to different audience segments based on behaviour, CRM data, or company firmographics, you need server-side testing. Visual editors work with page-level rules, not user-level data.

Structural page changes — Completely redesigning a page, changing the navigation structure, or testing a fundamentally different page layout often requires custom code even with a visual editor.

Testing server-rendered content — If your page is rendered on the server (SSR frameworks like Next.js, Nuxt, or Rails), visual changes applied client-side can still flicker or conflict with server-rendered state. Server-side testing is cleaner for these stacks.

Mobile app testing — Visual editors work on websites. For mobile apps (iOS/Android), you need SDK-based testing with your mobile development team.

High-performance pages — Pages where every millisecond of load time matters (e-commerce checkout, high-traffic landing pages) may see performance impacts from client-side DOM manipulation. Server-side rendering the variant is cleaner in these cases.

The 4 Best Visual A/B Testing Tools in 2026

1. ClickVariant — Best Value for Startups and Growth Teams

Visual editor quality: Click-to-edit, drag-and-drop, works on any website built on any stack

Price: Free tier (full analytics), $20/month Pro, $99/month Pro Plus

What makes it stand out for visual testing:

- No-flicker technique: variant loads with minimal DOM shift

- Point-and-click element selection: click any text, image, or button to change it

- Multi-variant support: test A vs B vs C without code

- Works on Shopify, WordPress, Webflow, Next.js, custom HTML: anything with a <head> tag

- India/SEA friendly: INR pricing at ₹1,650/month

Best for: Small to mid-size growth teams who need to run visual experiments without developer involvement. Particularly strong for India/SEA market teams.

Try ClickVariant free at clickvariant.com

2. VWO — The Established Enterprise Choice

Visual editor quality: Feature-rich, mature, includes heatmaps and session recordings alongside testing

Price: Starts at $314/month after the AB Tasty merger (2026)

Visual testing strengths:

- Comprehensive visual editor with advanced CSS editing

- Split URL testing (redirect entire pages to different URLs)

- Heatmaps integrated with the testing workflow

Limitation: Price point has moved significantly upward post-merger. Not accessible for early-stage teams.

3. Convert.com — Best for Privacy-First Teams

Visual editor quality: Good visual editor, GDPR-compliant, works cookieless

Price: From $99/month

When to pick Convert:

- You’re in Europe or running under strict GDPR/PDPA compliance

- You need dedicated privacy documentation for enterprise contracts

- You’re an agency managing multiple client accounts

4. AB Tasty — Now Enterprise-Only

Status: Merged with VWO in January 2026. Pricing now requires a sales call. Not viable for startups or SMBs.

Setting Up Your First Visual A/B Test: Step by Step

Here’s how to run your first visual experiment in ClickVariant from zero:

Step 1: Install the snippet

Copy your ClickVariant JS snippet from Settings → Installation. Paste it inside your website’s <head> tag. Verify it’s working (Settings → Installation → Verify).

Step 2: Choose your page

Start with your highest-traffic page, usually your homepage, pricing page, or primary landing page. Visual testing needs traffic to reach significance. Start where you have the most.

Step 3: Open the visual editor

In ClickVariant, create a new experiment and enter your page URL. The visual editor opens your live page in an iframe.

Step 4: Make your change

Click any element on the page. A toolbar appears with options:

- Edit text

- Change styles (colour, font, size)

- Move the element

- Hide the element

- Rearrange

For your first test: change your hero headline. Make variant B say something different — more specific, more outcome-focused.

Step 5: Set your goal

Click the conversion goal field. Select the button or form submission you want to track. ClickVariant tracks when visitors in each variant reach that goal.

Step 6: Set traffic allocation

Default: 50/50 split. Leave it unless you have a reason to weight differently.

Step 7: Launch

Click Start. ClickVariant begins splitting traffic and tracking conversions.

Step 8: Wait for significance

Check the sample size calculator: ClickVariant shows you how many more visitors you need before the result is reliable. Don’t stop early.

Common Visual A/B Testing Mistakes

Testing too many elements at once: If you change the headline AND the image AND the CTA in the same test, you don’t know which change drove the result. One change per test.

Stopping when you see a lead: If variant B is winning 55/45 after 300 visitors, that’s noise. Wait for statistical significance (typically 95% confidence) before declaring a winner.
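To see why an early lead is unreliable, here is a rough sketch of a two-proportion z-test, one standard way to check significance (testing tools may use other methods). The numbers are an assumed reading of the example above: 150 visitors per variant, with 18 conversions for A and 22 for B, which is a 45/55 split of 40 conversions. All function names are hypothetical.

```typescript
// Abramowitz-Stegun approximation of the error function (max error about 1.5e-7).
function erf(x: number): number {
  const sign = x < 0 ? -1 : 1;
  const ax = Math.abs(x);
  const t = 1 / (1 + 0.3275911 * ax);
  const poly =
    ((((1.061405429 * t - 1.453152027) * t + 1.421413741) * t - 0.284496736) * t +
      0.254829592) * t;
  return sign * (1 - poly * Math.exp(-ax * ax));
}

// Cumulative distribution function of the standard normal distribution.
function normalCdf(z: number): number {
  return 0.5 * (1 + erf(z / Math.SQRT2));
}

interface TestResult {
  rateA: number;
  rateB: number;
  z: number;
  pValue: number; // two-sided
}

// Pooled two-proportion z-test. Returns NaN values if no one (or everyone) converted.
function twoProportionZTest(
  convA: number,
  visitorsA: number,
  convB: number,
  visitorsB: number,
): TestResult {
  const rateA = convA / visitorsA;
  const rateB = convB / visitorsB;
  const pooled = (convA + convB) / (visitorsA + visitorsB);
  const standardError = Math.sqrt(pooled * (1 - pooled) * (1 / visitorsA + 1 / visitorsB));
  const z = (rateB - rateA) / standardError;
  const pValue = 2 * (1 - normalCdf(Math.abs(z)));
  return { rateA, rateB, z, pValue };
}

// Assumed example data: 18/150 conversions for A, 22/150 for B.
const early = twoProportionZTest(18, 150, 22, 150);
console.log(early.pValue < 0.05 ? "significant at 95%" : "not significant yet");
// prints "not significant yet"
```

With these assumed numbers the p-value is far above the 0.05 threshold that corresponds to 95% confidence, so the apparent lead is consistent with random variation.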

Testing on low-traffic pages: Visual tests on a page with 200 visitors/month take months to reach significance. Test your highest-traffic pages first.
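A back-of-the-envelope sample size estimate shows how quickly low traffic becomes a problem. The sketch below uses a common approximation for comparing two conversion rates at 95% confidence and 80% power. The 10% baseline, the 15% target, and the function names are assumptions for illustration, and a real calculator may use a slightly different formula.

```typescript
const Z_ALPHA = 1.96; // two-sided 95% confidence
const Z_BETA = 0.8416; // 80% power

// Approximate visitors needed per variant to detect a change
// from baselineRate to expectedRate.
function visitorsPerVariant(baselineRate: number, expectedRate: number): number {
  const variance =
    baselineRate * (1 - baselineRate) + expectedRate * (1 - expectedRate);
  const effect = expectedRate - baselineRate;
  const zSum = Z_ALPHA + Z_BETA;
  return Math.ceil((zSum * zSum * variance) / (effect * effect));
}

// Months needed when monthly traffic is split evenly across the variants.
function monthsToFinish(
  perVariant: number,
  variants: number,
  monthlyVisitors: number,
): number {
  return (perVariant * variants) / monthlyVisitors;
}

// Assumed scenario: 10% baseline conversion, hoping to detect a lift to 15%.
const needed = visitorsPerVariant(0.10, 0.15); // 683 per variant with these inputs
console.log(needed, monthsToFinish(needed, 2, 200).toFixed(1));
```

Under these assumptions a page with 200 visitors per month needs close to seven months to finish a two-variant test, and smaller expected lifts push that number up sharply because the required sample grows with the inverse square of the effect.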

Making the variant too different: A complete page redesign and a single headline change are very different tests. Keep early tests focused: one element, one hypothesis.

The Business Case for Visual Testing

Before visual editors existed, every A/B test required a developer sprint. A single experiment might take 2 weeks to go live. At 5 tests per quarter, you’d run 20 tests per year.

With a visual editor, a marketer can launch a test in under an hour. At 5 tests per month, you run 60 per year. That is three times as many experiments, and three times as many data-driven product decisions, from the same team.

The compound effect of running more experiments, faster, is the real value of visual A/B testing.